With the recent release of video footage of the Uber accident, in which one of its AVs struck and killed a pedestrian walking a bicycle, we can reach some initial conclusions about this unfortunate and avoidable incident. This analysis is based on the camera footage Uber released, Uber's deployed sensor equipment, and experience with current AV system behavior.

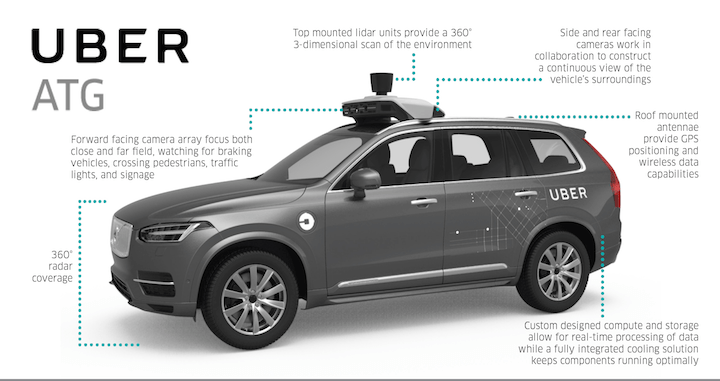

PERCEPTION

From reviewing the video, we see this pedestrian ("Ped") crossing perpendicular to traffic, from Lane-1 into Lane-2, in the dark, with the collision ultimately occurring in Lane-2. Uber's fleet carries an onboard Lidar, a version of Velodyne's HDL-64. This unit provides 360-degree coverage from its central viewpoint, with a viewable distance of 120 meters (~400 feet). Given its roof-mounted position (~80" from the ground) and this range, reliable object detection at even half that distance is achievable. To clarify Lidar capability: it works in complete darkness, with no visible illumination required. Given that the Ped originated in the median and proceeded from Lane-1 into Lane-2, we have an object moving on a trajectory into the vehicle's planner path. Uber's AI should have detected this Ped as an object in motion toward the vehicle, with a possible threat of collision. In addition, Uber claims to have 360-degree Radar coverage; however, we question its coverage radius, its elevation, and at what range it operates. On the current Uber fleet we see no recognizable lower Radar units (they could be hidden), and we have not been able to confirm any Radar in the roof sensor rack.
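The idea of an object "moving with a trajectory into the vehicle's planner path" can be illustrated with a constant-velocity prediction. The sketch below is a minimal, hypothetical example, not Uber's planner: it assumes a straight road, a constant ego speed, a pedestrian crossing at an assumed 1.4 m/s walking pace from the center of an adjacent lane (assumed 3.7 m lane width), and an assumed 1.8 m corridor half-width; the `Track` type and `time_to_conflict` function are illustrative names.

```python
from dataclasses import dataclass
from typing import Optional

MPH_TO_MPS = 0.44704  # exact conversion factor, mph -> m/s


@dataclass
class Track:
    """Hypothetical tracked-object state in the ego vehicle's frame."""
    x: float   # longitudinal distance ahead of the ego vehicle, m
    y: float   # lateral offset from ego lane center, m (positive = left, toward the median)
    vx: float  # longitudinal velocity, m/s (about 0 for a perpendicular crossing)
    vy: float  # lateral velocity, m/s (negative = moving right, across the ego lane)


def time_to_conflict(track: Track, ego_speed: float,
                     half_width: float = 1.8, horizon: float = 8.0) -> Optional[float]:
    """Predict, with constant velocities, whether the object will be inside the
    ego vehicle's swept corridor when the vehicle reaches it. Returns the time in
    seconds until that conflict, or None if no conflict is predicted."""
    closing_speed = ego_speed - track.vx
    if track.x <= 0 or closing_speed <= 0:
        return None  # object is behind the vehicle or pulling away
    t_reach = track.x / closing_speed
    if t_reach > horizon:
        return None  # beyond the (assumed) planning horizon
    y_at_reach = track.y + track.vy * t_reach  # predicted lateral position then
    return t_reach if abs(y_at_reach) <= half_width else None


if __name__ == "__main__":
    ego = 38 * MPH_TO_MPS
    # Pedestrian in the adjacent lane, 60 m ahead, walking toward the ego lane.
    walker = Track(x=60.0, y=3.7, vx=0.0, vy=-1.4)
    print(round(time_to_conflict(walker, ego), 2))  # 3.53
    # A static object in the median at the same distance never enters the corridor.
    parked = Track(x=60.0, y=5.5, vx=0.0, vy=0.0)
    print(time_to_conflict(parked, ego))  # None
```

The contrast between the two tracks mirrors the distinction the article draws: a stationary object beside the road is not a conflict, while the same object with a lateral velocity toward the lane becomes one seconds before the vehicle arrives.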

Therefore, with Radar properly sensor-fused with the Lidar data, the Ped should have been detected while still in Lane-1, at the reported 38 mph speed, at a distance of at least 150 feet. The Uber-AI would have had the opportunity to slow and/or brake to better understand the moving object approaching the vehicle from the adjacent lane.
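Whether a 150-foot detection at 38 mph leaves room to stop can be checked with basic kinematics: stopping distance is the distance covered during system latency plus the braking distance, d = v·t_latency + v²/(2a). The sketch below assumes a 0.5 s perception-to-brake latency and a constant 0.7 g deceleration; both values are illustrative assumptions, not Uber figures.

```python
MPH_TO_MPS = 0.44704  # exact, mph -> m/s
FT_TO_M = 0.3048      # exact, ft -> m
G = 9.80665           # standard gravity, m/s^2


def stopping_distance(speed_mps: float, latency_s: float, decel_g: float) -> float:
    """Distance covered during system latency plus constant-deceleration braking:
    d = v * t_latency + v^2 / (2a)."""
    a = decel_g * G
    return speed_mps * latency_s + speed_mps ** 2 / (2 * a)


v = 38 * MPH_TO_MPS             # reported speed, about 17.0 m/s
detect_range = 150 * FT_TO_M    # detection distance from the text, 45.72 m

time_to_reach = detect_range / v
stop = stopping_distance(v, latency_s=0.5, decel_g=0.7)  # assumed latency and deceleration

print(f"time to reach Ped: {time_to_reach:.2f} s")
print(f"stopping distance: {stop:.1f} m ({stop / FT_TO_M:.0f} ft)")
print(f"margin: {detect_range - stop:.1f} m")
```

Under these assumptions the vehicle needs just under 30 m (about 97 ft) to come to a stop, well inside 150 ft. A higher latency or a lower deceleration shrinks the margin, which is why detection distance matters as much as braking capability.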

There has been discussion concerning the challenge of "object classification" in this situation, given that it was a person walking a bike with bags, and whether the Uber-AI was confused about what the object was. If the AI is unable to classify an object, should it simply ignore it? Was this stretch of roadway designated to exclude possible pedestrians or foreign objects (FOD)? This is sometimes done on highways to reduce sensitivity and eliminate excessively jerky braking/steering in response to small miscellaneous items like plastic bags and road debris. However, this is a low-speed 35 mph zone. Is it possible that in Uber's software pedestrians are only tracked while in dedicated crosswalks, or bikes only in bike lanes? I hope not. We must be prepared for any possible scenario; pedestrians and bikes should be monitored in all areas, just like other objects that could come into conflict with travel.

If there was any confusion as to what the Ped was in this incident (a pedestrian and a bicycle together make a larger single detectable object), brake application should have been the first response, given the size of the object obstructing the travel lane. From the above picture, the Ped obstructed at least 50% of the lane, which constitutes a lane blockage. The question is why the Uber-AI did NOT identify the Ped as a collision factor early, while still in Lane-1, and slow or brake, or else emergency brake or make an evasive lane change when the Ped was directly in front in Lane-2. (To date, it has been reported that no brake application was made until after the collision.) The deeper issue is the claimed Lidar and Radar coverage of the vehicle: 1) are the sensors fused properly and are detection distances optimal, 2) are the sensors properly calibrated, and 3) are objects detected crossing the planner path properly flagged as potential collisions? We would need to see Uber's proprietary UI view to get the full story of the software's perspective on the surrounding objects. (No such release has been made as of this writing.)
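The argument that an unclassified object blocking at least half of the lane should trigger braking, whatever its class, can be written as a simple rule. This is a hypothetical sketch, not any production planner: the class labels, the 50% brake threshold (taken from the lane-obstruction estimate in the text), the 10% limit for ignorable debris, and the function names are all assumptions. The example also assumes a 3.7 m lane and a roughly 2.0 m lateral extent for a person walking a bicycle broadside.

```python
from typing import Optional

# Hypothetical classes that a highway debris filter might treat as ignorable.
IGNORABLE_CLASSES = {"plastic_bag", "small_debris"}


def lane_overlap_fraction(obj_left: float, obj_right: float,
                          lane_left: float, lane_right: float) -> float:
    """Fraction of a lane's width covered by an object's lateral extent.
    All values are lateral positions in meters, with left > right."""
    overlap = min(obj_left, lane_left) - max(obj_right, lane_right)
    return max(0.0, overlap) / (lane_left - lane_right)


def plan_response(label: Optional[str], overlap: float,
                  brake_threshold: float = 0.5,
                  ignore_max_overlap: float = 0.1) -> str:
    """Pick a response from lane overlap. An unclassified object (label=None)
    is handled by its size and is never ignored."""
    if label in IGNORABLE_CLASSES and overlap < ignore_max_overlap:
        return "continue"  # small, positively identified debris
    if overlap >= brake_threshold:
        return "brake"     # lane blockage, whatever the object is
    if overlap > 0.0:
        return "slow"      # partial intrusion: slow down and keep tracking
    return "monitor"       # outside the lane: keep tracking


if __name__ == "__main__":
    # About 2.0 m of an assumed 3.7 m lane is blocked by an unclassified object.
    overlap = lane_overlap_fraction(0.5, -1.5, 1.85, -1.85)
    print(round(overlap, 2), plan_response(None, overlap))  # 0.54 brake
```

The key design choice is that a failed classification falls through to the size-based branches: only an object that is both positively identified as harmless and small is allowed to be ignored.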

As of this writing, Tempe police have stated that the victim "came out of the shadows and was difficult to see." From a human's visual perspective, this is correct. However, from the perspective of an autonomous vehicle with Lidar and Radar onboard, it is not accurate. A properly configured AV can see BEYOND typical human sight, so it does not need to depend solely on headlight illumination. Although current hi-res camera detection is reasonable in low lighting conditions (<10 lux), passive cameras are no match for Radar and Lidar for object detection in darkness. Comprehending the advantages of AVs requires a fundamental shift in understanding how their sensing capabilities exceed those of human drivers. Government, legal, and insurance institutions will need to better understand how safe these technologies can be when they are used and calibrated properly, and will need to adjust to these new expectations of AV systems to further protect the public. Do we need stricter regulation now? That's for another post.
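Lidar does not depend on headlights because it is an active sensor: it emits its own laser pulse and times the reflection, so range follows from the round-trip time as r = c·Δt/2. Radar is likewise active, supplying its own radio-frequency illumination. The minimal sketch below applies that relation to the 120 m range cited for the HDL-64; the function names are illustrative, not part of any Velodyne API.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s, exact by definition


def range_from_round_trip(dt_seconds: float) -> float:
    """Range to a reflecting surface from a pulse's round-trip time: r = c * dt / 2."""
    return SPEED_OF_LIGHT * dt_seconds / 2.0


def round_trip_for_range(range_m: float) -> float:
    """Inverse relation: round-trip time for a target at the given range."""
    return 2.0 * range_m / SPEED_OF_LIGHT


if __name__ == "__main__":
    dt = round_trip_for_range(120.0)
    print(f"round trip for 120 m: {dt * 1e9:.0f} ns")  # 801 ns
```

Nothing in this measurement depends on ambient light, which is the point the paragraph makes about "coming out of the shadows."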

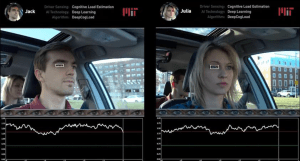

SAFETY DRIVER

The purpose of safety drivers is to ensure that vehicles in development act safely and perform as well as human drivers or better. Safety drivers are expected to be even MORE conscientious and aware of their surroundings, as they shoulder responsibility not only for their personal safety, but for the people and property around the vehicle, the company they represent, and the AV industry as a whole. In this situation, we see that the Uber safety driver is clearly distracted by something low on the dash, to the driver's right. The driver's eyes continuously shift down and at times stay pinned to that lower, non-driving visual plane. We are not sure whether the driver is focused on the center dashboard screen or a cellphone, but the driver's attention is split between driving and something else. Given this event, distracted driving by a safety driver cannot be tolerated. Given the location where this occurred, the driver should have been more vigilant in scanning the darkened road and focused OUTSIDE the vehicle. The driver could have had the opportunity to brake and swerve to avoid the collision. But without safety drivers continuously focusing outside the vehicle, we will continue to see potential collisions until AV systems can be taught to work in dense environments where pedestrians appear unexpectedly, such as downtowns, campuses, and business parks. A static object in the median is one case, but an object moving from the median into the vehicle's travel lane is a more critical scenario to address sooner rather than later. In this case, the last safety net (the safety driver) was not effective, given the gap in this L5 platform.
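To put the safety driver's downward glances in perspective: distance covered while the eyes are off the road grows linearly with speed. The quick sketch below uses the reported 38 mph; the glance durations are illustrative assumptions, not measurements taken from the video.

```python
MPH_TO_FTPS = 5280 / 3600  # 1 mph is about 1.467 ft/s


def feet_traveled(speed_mph: float, seconds: float) -> float:
    """Distance covered at constant speed while the driver's eyes are off the road."""
    return speed_mph * MPH_TO_FTPS * seconds


for glance_s in (1.0, 2.0, 3.0):  # illustrative glance durations
    print(f"{glance_s:.0f} s eyes-off at 38 mph: {feet_traveled(38, glance_s):.0f} ft")
```

This prints 56, 111, and 167 ft respectively: a three-second glance covers more ground than the 150-foot detection distance discussed earlier.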

Given the rapid development of AVs toward L4/5 driverless mode, we may need to take a more conservative approach to ensure vehicles can operate in unexpected "edge" cases. Although testing and development costs may rise, a safety driver attentiveness tool, similar to the eye-motion tracking systems now in development, should be used to monitor safety drivers and ensure they stay focused and prepared to intervene when necessary. This has been the issue with L3 vehicle development and the "handoff conundrum" we have all been grappling with. In situations where safety drivers are needed to operate AVs, the vehicles are technically L3/4 vehicles that require human intervention during operation. Therefore, we can learn from this situation about the handoff, and about what can happen if you are distracted at 40 mph at night versus 75 mph on a freeway. Different levels of human interaction are necessary during testing, and we will need to bridge this human gap as soon as possible while the AI trains toward L5 capability. Until we have accumulated millions of miles per platform in all conditions, we will need to address driver attentiveness issues to eliminate future incidents.
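A safety driver attentiveness tool of the kind described above could be sketched as follows. This is a hypothetical illustration, not any vendor's product: it assumes an eye-tracking source that already labels each sample as eyes-on-road or not, sampled at a fixed rate (so sample counts approximate time), and it raises an alert when a single off-road glance lasts at least 2 s or when more than 30% of the last 10 s was spent off the road. All three thresholds are illustrative assumptions, not regulatory values.

```python
from collections import deque


class AttentionMonitor:
    """Flags inattention from a stream of (timestamp_s, eyes_on_road) samples.
    Assumes a fixed sampling rate, so sample counts approximate time shares."""

    def __init__(self, max_glance_s: float = 2.0, window_s: float = 10.0,
                 max_off_fraction: float = 0.3):
        self.max_glance_s = max_glance_s          # longest tolerated single glance
        self.window_s = window_s                  # sliding window length
        self.max_off_fraction = max_off_fraction  # tolerated off-road share of window
        self.samples = deque()                    # (timestamp, eyes_on_road) in window
        self.glance_start = None                  # start of the current off-road glance

    def update(self, t: float, eyes_on_road: bool) -> bool:
        """Add one sample; return True if an attention alert should be raised."""
        self.samples.append((t, eyes_on_road))
        # Drop samples that have fallen out of the sliding window.
        while self.samples and t - self.samples[0][0] > self.window_s:
            self.samples.popleft()
        # Track the duration of the current continuous off-road glance.
        if eyes_on_road:
            self.glance_start = None
        elif self.glance_start is None:
            self.glance_start = t
        long_glance = (self.glance_start is not None
                       and t - self.glance_start >= self.max_glance_s)
        off = sum(1 for _, on in self.samples if not on)
        return long_glance or off / len(self.samples) > self.max_off_fraction


if __name__ == "__main__":
    monitor = AttentionMonitor()
    # Simulated 10 Hz stream: eyes on the road for 5 s, then fixed on the dash.
    for i in range(100):
        t = i / 10
        if monitor.update(t, eyes_on_road=i < 50):
            print(f"attention alert at t={t:.1f} s")  # attention alert at t=7.0 s
            break
```

Combining a per-glance limit with a windowed share catches both one long look at a screen and the repeated short glances described in the video.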

SUMMARY

Given this incident's unfortunate distinction as our industry's first self-driving car fatality, we must all learn carefully from it. In this case, the fault resides in two distinct areas: 1) a Sensor/AI false-negative object detection resulting in no evasive maneuvering, and 2) distracted driving by the safety driver.

This situation, pedestrians crossing the road in non-designated areas, is not uncommon. Anyone who has driven in denser environments such as downtowns, crowded campuses, and highly populous geographies such as China and India knows that unexpected pedestrian crossings are common, even at night. AVs will need to be vigilant and leverage their advanced capabilities to see beyond human sight, understand the objects they detect (even unclassified ones), and act accordingly to protect pedestrians and passengers alike. These advanced capabilities, the culmination of the resources we are all investing in this mobility effort, are what will allow us in the AV industry to drive deaths and accidents toward zero. A single death, even while developing these technologies, is unacceptable, and all participants must clearly understand why it occurred.

We ask Uber to share further AI data related to this incident with the AV community so we can all learn from this gap.

Written By: Marcus Strom, Founder, ADR
